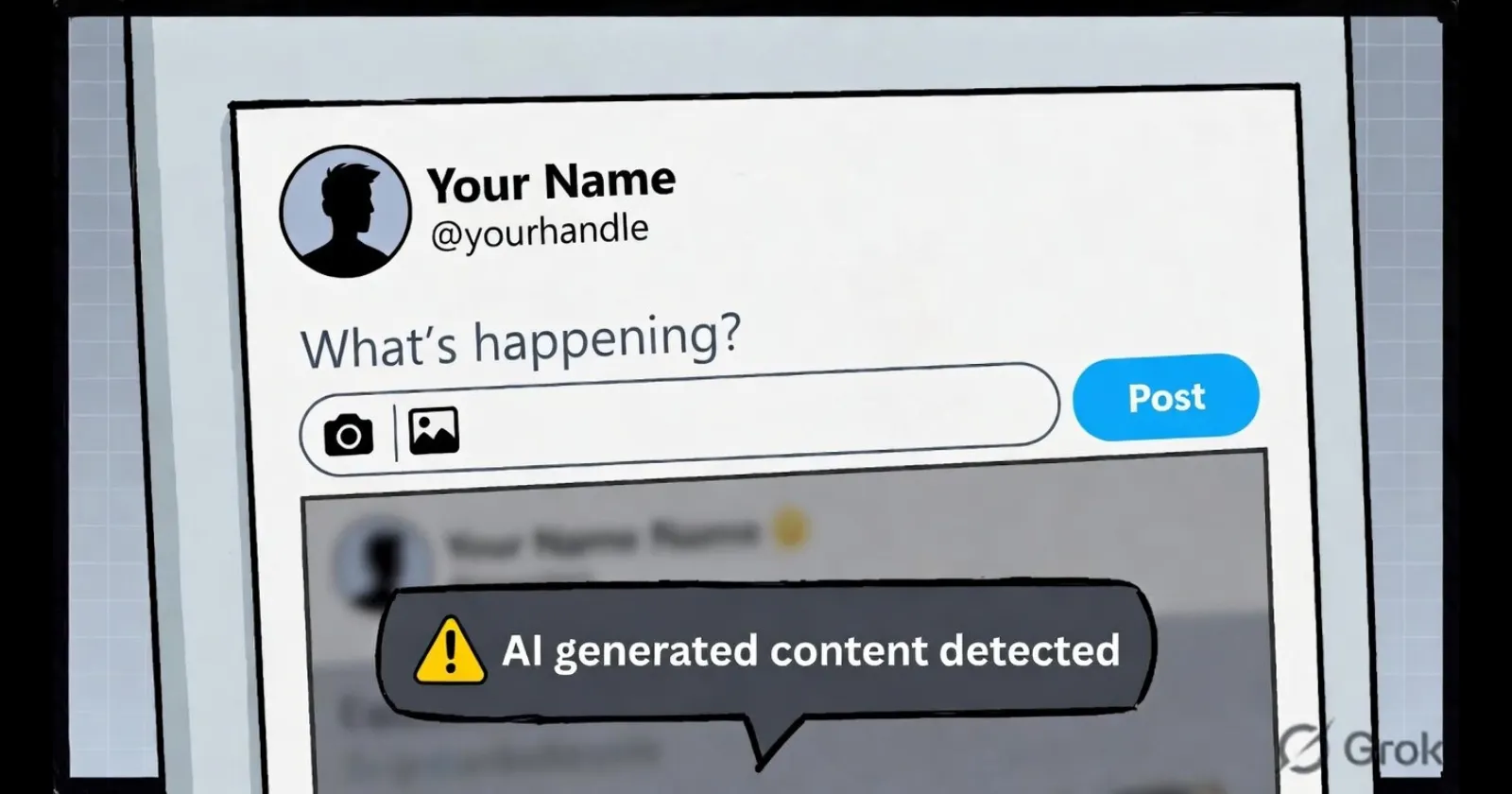

X, the social-media platform formerly known as Twitter, is quietly preparing to flag posts its algorithms suspect were produced by artificial-intelligence systems, according to code strings uncovered by reverse engineers. The discovery suggests users will soon see an on-screen prompt—appearing before publication—that warns “AI generated content detected” and encourages manual disclosure when machine-authored text is suspected.

The move, if rolled out broadly, would place X among the first major consumer platforms to intercept AI-generated material at the moment of composition rather than after the fact. It also signals Elon Musk’s network is seeking a middle path between the European Union’s hardening requirements for synthetic-media disclosure and advertisers’ demands that their messages not appear alongside potentially misleading bot-written posts.

Strings in the Android Bundle

Mobile researcher PiunikaWeb first located the relevant strings hidden inside a recent Android build of the X application. The fragments include a dialogue title—“AI generated content detected”—and explanatory text stating “This post appears to contain AI-generated content. Consider adding a label so people understand its origin.” Users would retain the option to dismiss the alert and publish unaltered, but the friction marks a notable shift from today’s laissez-faire environment.

Reverse engineer Alessandro Paluzzi, whose findings have prefigured earlier product launches, corroborated the discovery, posting screenshots that show the prompt appearing inside the composer window. X has yet to confirm the experiment publicly, and platform representatives did not respond to requests for comment.

Automated Detection Meets Human Judgement

Current platform policies already ban misleading manipulated media, yet enforcement relies heavily on user reports or post-hoc takedowns. By attempting real-time classification, X would move upstream, nudging creators toward transparency before virality sets in. The technical challenge is formidable: large-language-model outputs can mimic human writing styles with uncanny accuracy, and detectors suffer from both false positives and an inability to keep pace with model improvements.

Dr. Soheil Feizi, a computer-science professor at the University of Maryland, cautioned that “even state-of-the-art detectors can be evaded with simple prompting tricks.” His 2023 study found reliability drops sharply once adversarial users paraphrase AI text. “Platforms need to treat detection scores as signals, not verdicts,” Feizi said.

X’s apparent approach, suggestive rather than prohibitive, mirrors the softer “nudge” philosophy popularised by behavioural economists: preserve user agency, but make the ethical choice the path of least resistance. Whether that proves sufficient for regulators remains uncertain. The EU’s pending AI Act will require platforms to label synthetic audio, video, or text deemed “deepfakes,” with non-compliance fines reaching 7% of global turnover.

Advertiser Pressure and Political Risk

Beyond regulatory optics, X has a commercial incentive to reassure marketers who fled last year after a surge in controversial content. Media measurement giant DoubleVerify reported in January that brand-safety scores improved on platforms that apply visible AI labels, suggesting marketers pay a CPM premium for clean environments. X’s ad revenue remains down roughly 40% year-over-year, according to third-party estimates, intensifying pressure to demonstrate proactive moderation.

Political risk also looms large. With more than 50 national elections scheduled in 2026, governments fear generative AI could swamp voters with fabricated endorsements or synthetic scandal. The U.S. Federal Election Commission is weighing stricter disclosure rules, while Australia’s eSafety commissioner has already issued notices to tech firms requiring “watermarking or labelling of AI outputs.”

Industry Precedents and Technical Standards

Other networks have taken divergent tacks. TikTok in March began requiring creators to disclose AI-generated material, threatening takedowns for non-compliance. Instagram parent Meta has floated embedding invisible watermarks in images produced by its own generative tools, though enforcement for third-party content remains limited. YouTube pledges to display labels on “realistic” synthetic videos but depends largely on uploaders’ self-attestation.

Standards bodies are racing to catch up. Adobe and the BBC are backing Content Authenticity Initiative metadata, while Microsoft and Intel promote watermarking at the processor level. Yet fragmentation persists, and no single specification dominates. X’s reliance on in-app warnings rather than embedded metadata may reflect that uncertainty.

Privacy and Competition Concerns

Some civil-society groups warn that aggressive AI detection could chill legitimate anonymity, particularly for activists in repressive regimes. “If a dissident uses AI translation tools to obscure their writing style, being forced to label the output could increase surveillance risk,” said Natali Helberger, professor of law and digital technology at the University of Amsterdam. She advocates allowing pseudonymous accounts to opt out of public labels while privately sharing metadata with regulators.

Competitive dynamics also surface. By positioning itself as a gatekeeper, X could obtain early insight into emerging generative models—data that might feed its own AI ambitions. Elon Musk co-founded xAI, whose Grok chatbot competes with offerings from OpenAI and Anthropic. Access to large-scale, real-time examples of evasive AI output could refine detectors that later appear in xAI products.

What Happens Next

X has a history of public experimentation, releasing features to small cohorts before global rollout or quiet retraction. Code strings do not guarantee product launch, yet the specificity of the interface language suggests development is advanced. App researcher Paluzzi notes the prompt includes a “Learn more” hyperlink, implying support pages are also drafted.

If deployed, the feature is likely to start with English-language markets, leveraging probability-based classifiers trained on open-source corpora. Success metrics could include label adoption rates, user appeals, and downstream moderation queues. Early feedback will be scrutinised not only by regulators but by competing networks weighing similar interventions.

For creators, the prospect of real-time AI flagging adds another layer of editorial vigilance. Freelance journalist Rebecca Quin expressed mixed feelings: “On one hand, transparency matters; on the other, automated systems often misclassify non-native English as synthetic. I’d rather see investment in provenance infrastructure than pop-up prompts.”

Whether X’s nudge proves a template for the industry or a temporary experiment will become clearer in the coming months. As generative models proliferate, the line between human and machine speech blurs ever further, and platforms that fail to confront the ambiguity risk ceding narrative control to those who exploit it.
